
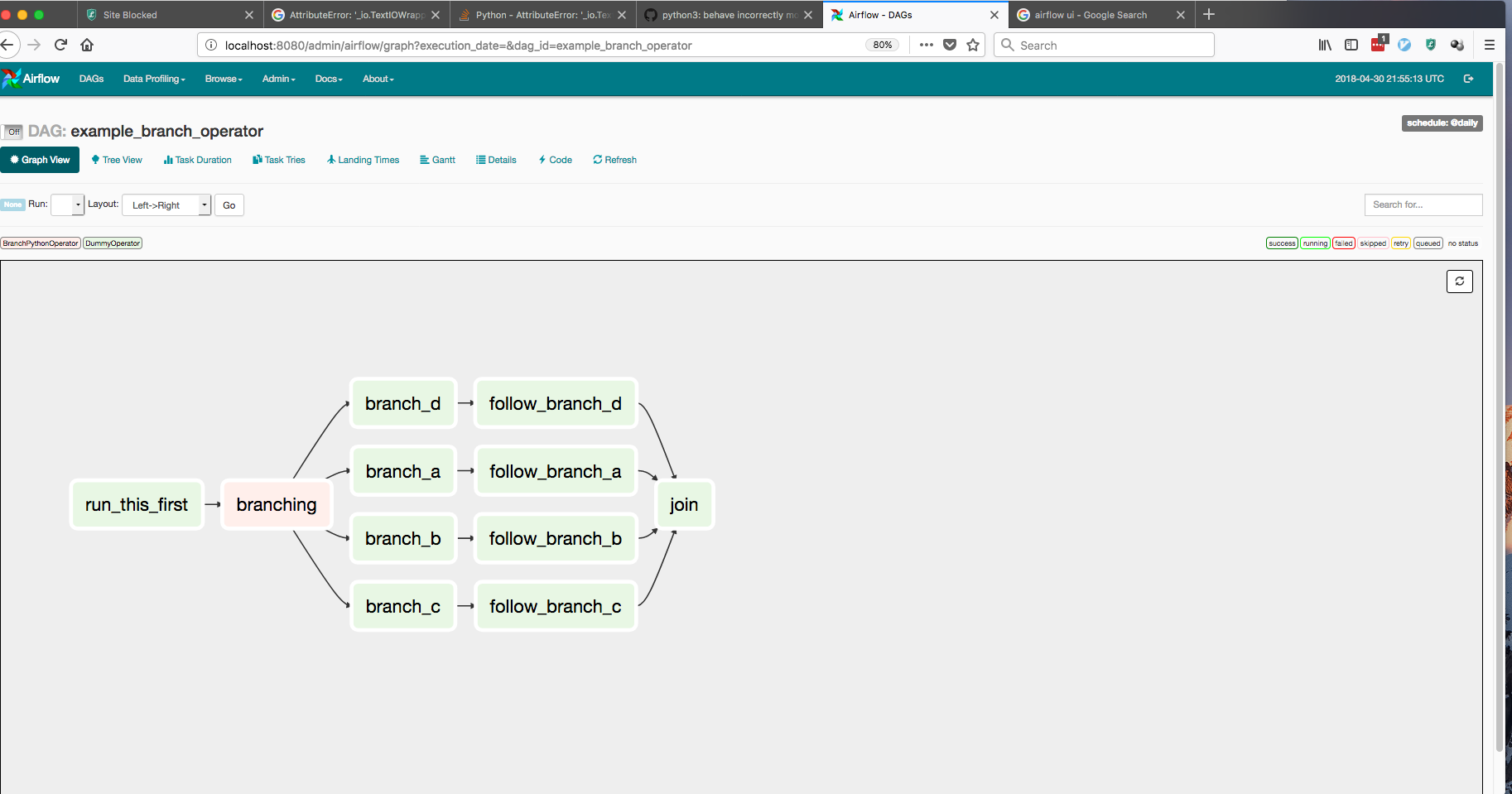

In this blog post, we set up Apache Spark and Apache Airflow using Docker containers, and in the end we run and schedule Spark jobs using Airflow. The main focus is on how to launch Airflow using an extended image on Docker, construct a DAG with PythonOperator-focused tasks, and utilize XComs. The original source is: How to use the DockerOperator in Apache Airflow.

The Apache Airflow community releases Docker images that serve as reference images for Apache Airflow. Installing Airflow with the docker extra automatically installs the apache-airflow-providers-docker package, but you can manage, upgrade, or remove provider packages separately from the Airflow core. All Airflow 2.0 operators are backwards compatible with Airflow 1.10 using the backport provider packages. Features listed for the Docker provider include “Add correct widgets in Docker Hook” (28700), “Make docker operators always use DockerHook for API calls” (28363), and “Skip DockerOperator task when it…”.

The next step is to create a Dockerfile that extends the Airflow base image (apache/airflow:2.2.5) with Python packages that are not included in it. Create a file called Dockerfile in the same directory as the docker-compose.yaml file and put the build instructions in it.

The second part of the DAG is where the DockerOperator is used to start a Docker container with Spark and kick off a Spark job using the SimpleApp.py file. Notice the environment and the volumes parameters in the DockerOperator. The volumes parameter contains the mapping between the host (“/home/airflow/simple-app”) and the Docker container (“/simple-app”), which gives the container access to the cloned repository and therefore to the SimpleApp.py script. In this example, the environment variables are used by Spark inside the Docker container: PYSPARK_PYTHON makes Python 3 the default interpreter of PySpark, and SPARK_HOME contains the path where the SimpleApp.py script goes to fetch the README.md file. If a login to a private registry is required before pulling the image, a Docker connection needs to be configured in Airflow and its connection ID provided through the docker_conn_id parameter.

One problem reported when running a DockerOperator DAG from the Airflow UI is an error in the Docker command task that begins with “Reading local file”.

By the way, I’m not going to explain here what the BranchPythonOperator does or why there is a dummy task, but if you are interested in learning more about Airflow, feel free to check out my course.
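
As a rough sketch of how these parameters fit together, the helper below collects the DockerOperator arguments discussed above into a plain dictionary. The function name, the task ID, the image name, the value python3 for PYSPARK_PYTHON, and the /spark value for SPARK_HOME (guessed from the spark-submit path) are assumptions for illustration, not taken from the original DAG. As far as I know, newer releases of the Docker provider replace volumes with a mounts parameter, so check which version you run.

```python
def build_docker_task_kwargs(
    host_dir="/home/airflow/simple-app",
    container_dir="/simple-app",
    spark_home="/spark",          # assumption: inferred from the spark-submit path
    docker_conn_id=None,
):
    """Collect the DockerOperator arguments discussed in the post into one dict."""
    kwargs = {
        "task_id": "docker_command",        # hypothetical task ID
        "image": "my-spark-image:latest",   # hypothetical image name
        # Host directory on the left, container directory on the right.
        "volumes": [f"{host_dir}:{container_dir}"],
        # Variables read by Spark inside the container.
        "environment": {
            "PYSPARK_PYTHON": "python3",    # assumption: interpreter name
            "SPARK_HOME": spark_home,
        },
        "command": (
            f"{spark_home}/bin/spark-submit --master local "
            f"{container_dir}/SimpleApp.py"
        ),
        "docker_url": "unix://var/run/docker.sock",
        "network_mode": "bridge",
    }
    # Only needed when the image lives in a private registry.
    if docker_conn_id is not None:
        kwargs["docker_conn_id"] = docker_conn_id
    return kwargs


if __name__ == "__main__":
    for key, value in build_docker_task_kwargs().items():
        print(f"{key} = {value!r}")
```

If the Docker provider is installed, the dictionary can be unpacked into the operator, for example DockerOperator(dag=dag, **build_docker_task_kwargs()).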

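The first part of the DAG is where the BranchPythonOperator is used to select one branch or another according to whether or not the repository exists. The surviving part of the DAG code is shown below, with the missing pieces marked by ellipses:

    from datetime import datetime, timedelta

    from airflow import DAG
    # Legacy (Airflow 1.10) import paths, matching the "_operator" module names.
    from airflow.operators.bash_operator import BashOperator
    from airflow.operators.docker_operator import DockerOperator

    default_args = ...  # contents elided

    # ... (DAG definition and the t_git_pull task elided)

    t_docker = DockerOperator(
        # ... (arguments before volumes elided)
        volumes=...,  # host-to-container mapping, elided
        # spark-submit takes --master with a double hyphen
        command='/spark/bin/spark-submit --master local /simple-app/SimpleApp.py',
        docker_url='unix://var/run/docker.sock',
        network_mode='bridge',
    )

    # Run the Docker task after the git pull task.
    t_git_pull >> t_docker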
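
The branching step can be sketched as a plain Python function, assuming that “the repository exists” means a .git directory is present under the clone path. A BranchPythonOperator expects its callable to return the task ID of the branch to follow. The function name and the task IDs git_pull and git_clone are hypothetical, not taken from the original DAG.

```python
import os


def choose_branch(repo_dir="/home/airflow/simple-app"):
    """Return the task ID of the branch to follow.

    Assumption: the repository counts as existing when repo_dir
    contains a .git directory.
    """
    if os.path.isdir(os.path.join(repo_dir, ".git")):
        return "git_pull"    # hypothetical task ID: repository already cloned
    return "git_clone"       # hypothetical task ID: repository must be cloned first


if __name__ == "__main__":
    print(choose_branch())
```

Such a function would be passed as the python_callable of the BranchPythonOperator, and Airflow would then skip the branch whose task ID was not returned.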
